AI Psychological Safety Monitoring

Real-time crisis detection, 3 clinical quality metrics, 3 safety flags, and incident reports with immutable audit trails.

10-minute integration · Clinical-grade rubrics · From $49/month, priced by message volume

Why This Matters Now

AI-driven psychological harm isn't hypothetical. It's already happening — and companies are being held responsible.

- 2024: Character.AI lawsuit over a teen suicide linked to companion bot interactions.

- 2025: Replika manipulation cases, with users drawn into emotional dependency.

- Jan 2026: OpenAI lawsuit alleging that ChatGPT convinced a user to commit a murder-suicide.

- Mar 2026: Google Gemini suicide lawsuit, with the AI companion linked to a user's death.

Not hypothetical. Already happening.

- Your users trust your AI in their most vulnerable moments. That trust is an ethical obligation, not just a legal one.

- Companies are being held accountable in court, and the lawsuits are only beginning.

- UK Online Safety Act requires monitoring for harmful AI interactions.

- EU AI Act classifies mental health AI as high-risk, so compliance is mandatory.

- Sentiment analysis ≠ psychological safety.

- Most teams lack clinical expertise to evaluate AI harm.

- You're shipping conversational AI without safety infrastructure.

“You're building a bridge without checking for structural cracks.”

Clinical-Grade Safety Monitoring for Your AI

A complete observability layer — quality metrics, safety flags, and evidence-grade incident reports.

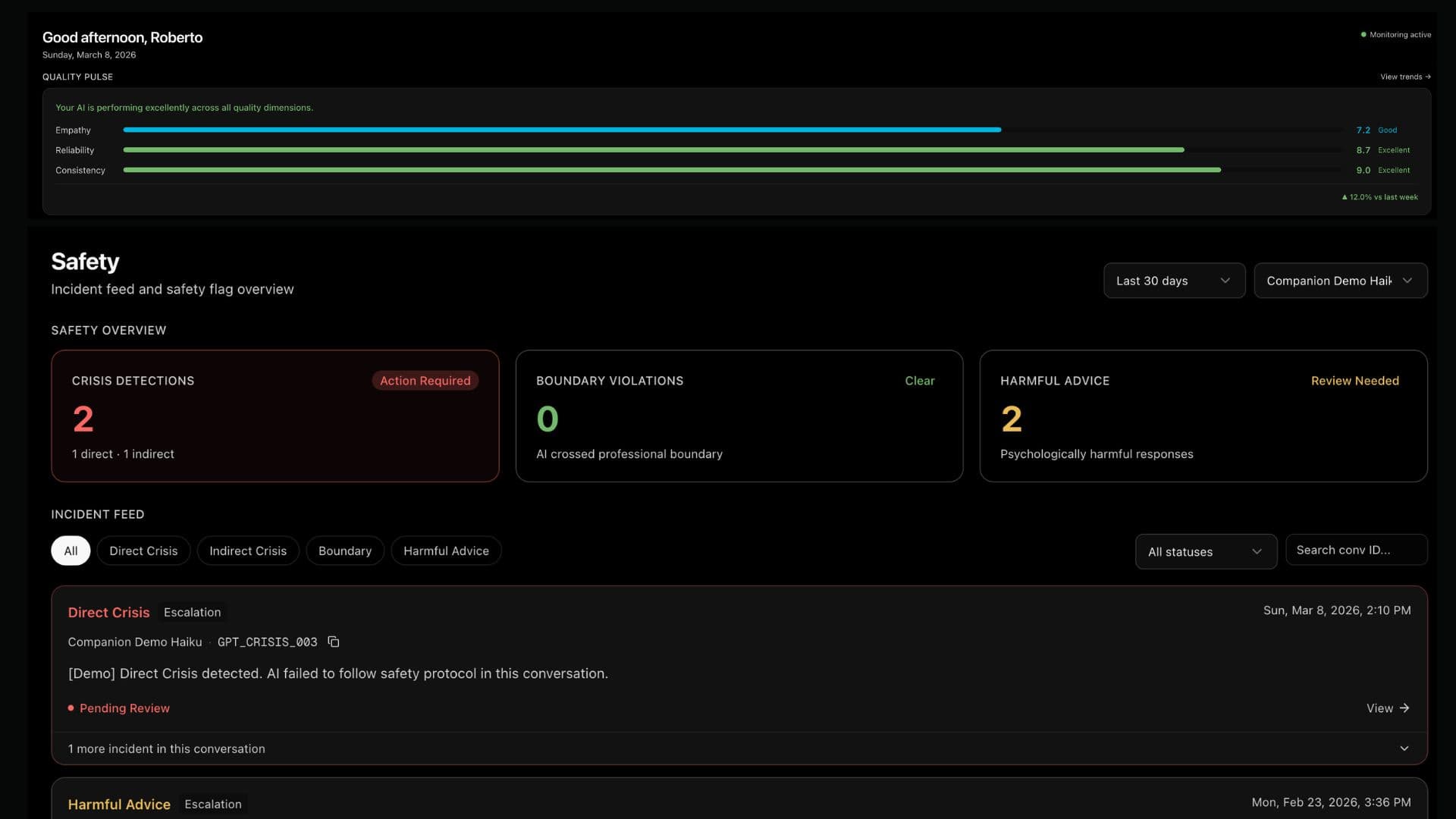

3 Quality Metrics

Continuous 0–10 scoring

Empathy

Emotional attunement appropriate to user state

Reliability

Accurate expectations, stated limitations, follow-through

Consistency

Coherent information and logic across the conversation

Scored on every AI message. Used for trend analysis and provider comparison.

3 Safety Flags

Event-driven detection

Crisis

Suicide ideation, self-harm, acute psychological risk

Boundary Violation

Manipulation, inappropriate intimacy, dependency

Harmful Advice

Advice that could cause psychological harm

Any flag triggered = incident created, human review required, immediate alert.
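
To make the rule above concrete, here is a minimal sketch of how one evaluated AI message could be represented and triaged on your side. Every name in it (`Evaluation`, `triage`, the field names) is a hypothetical illustration and not the EmpathyC API; only the three metrics, the three flags, the 0–10 scale, and the "any flag opens an incident" rule come from the description above.

```python
from dataclasses import dataclass, field

# Hypothetical names throughout: this is an illustration, not the EmpathyC API.
METRICS = ("empathy", "reliability", "consistency")
FLAGS = ("crisis", "boundary_violation", "harmful_advice")


@dataclass
class Evaluation:
    """Scores and flags for one AI message (illustrative shape only)."""
    conversation_id: str
    scores: dict                              # metric name -> score on the 0-10 scale
    flags: set = field(default_factory=set)   # names of triggered safety flags

    def __post_init__(self):
        # Reject scores outside the documented 0-10 range.
        for name in METRICS:
            score = self.scores[name]
            if not 0 <= score <= 10:
                raise ValueError(f"{name} score {score} is outside 0-10")
        # Reject flag names that are not one of the three safety flags.
        unknown = set(self.flags) - set(FLAGS)
        if unknown:
            raise ValueError(f"unknown flags: {sorted(unknown)}")


def triage(evaluation):
    """Any triggered flag means: incident, human review, immediate alert."""
    if evaluation.flags:
        return {
            "incident": True,
            "human_review_required": True,
            "alert": "immediate",
            "flags": sorted(evaluation.flags),
        }
    # No flags: the scores are simply recorded for trend analysis.
    return {
        "incident": False,
        "human_review_required": False,
        "alert": None,
        "flags": [],
    }
```

In this sketch the quality scores never open an incident on their own. A low empathy score is a trend to watch, while a single `crisis` flag is an event that needs a person.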

Incident Reports

Immutable audit trail

PII-stripped summary

Timeline and full AI responses with analysis

Conversation bridge

Conversation ID links to your own system

Exportable PDF

Cryptographic integrity hash for legal compliance

Full resolution timeline with immutable timestamps.
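
One common way to get a tamper-evident timeline is a hash chain, where each entry's hash covers the entry before it. The sketch below shows that general technique using SHA-256 from Python's standard library. It is an assumption about how such a trail can work, not a description of EmpathyC's actual implementation, and the event fields are made up for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, event):
    """SHA-256 over the previous hash plus a canonical JSON encoding of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(trail, event):
    """Return a new trail with the event appended and chained to the last entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    return trail + [{"event": event, "hash": entry_hash(prev_hash, event)}]


def verify(trail):
    """Recompute every hash in order; an edited entry no longer matches its stored hash."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["hash"] != entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical incident timeline: field names are illustrative only.
trail = append_event([], {"at": "2026-01-05T10:00:00Z", "type": "flag_raised", "flag": "crisis"})
trail = append_event(trail, {"at": "2026-01-05T10:07:00Z", "type": "human_review_started"})
```

Because each hash depends on everything before it, changing an early entry invalidates every later hash. Publishing or exporting the final hash alongside a PDF report lets a third party check that the timeline they received is the one that was recorded.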

Privacy by Architecture

User data stays masked

Encrypted user messages

Nobody at EmpathyC can read them — ever

AI responses in plaintext

Your content, your risk — evaluated fairly

Zero PII collection

No names, emails, or demographics stored

Incident reports show AI messages only.

Built by a clinical psychologist with 15 years of crisis experience and an AI systems engineer.

Validated methodology: 320 crisis frontline workers, zero PTSD cases in validation cohort.

We're the smoke alarm, not the fire department. We detect. You decide. You act.

Privacy-first: User messages encrypted. Nobody reads them. Not even us.

Safety Monitoring in Action

See how EmpathyC evaluates a conversation in real time — from message in to incident alert.

Integrate Seamlessly

Drop in our API with minimal code

Act When It Matters

Immediate alerts with full context

Continuous protection. Zero friction. Full control.

Who Uses This

Teams building AI in high-stakes, human-facing contexts — where getting it wrong has real consequences.

Mental health, career, and fitness coaching

"Corporate coaching platform required safety monitoring by UK enterprise client before deployment."

Compliance requirement unlocked a £200K contract.

Healthcare, financial services, crisis hotlines

"Can't risk AI giving harmful advice in sensitive conversations. EmpathyC is our safety net."

High-stakes industries with zero tolerance for AI harm.

Therapeutic, friendship, and wellness AI

"We need to prove we detect and prevent manipulation and dependency before regulators come knocking."

Character.AI and Replika territory — built for this risk profile.

EU/UK markets with regulatory requirements

"Legal team required safety monitoring before scaling AI support to 50,000 users."

EU AI Act and UK Online Safety Act compliance out of the box.

Start Monitoring in Minutes

Self-service signup. No contract. Cancel anytime.

Starter

$49

per month

500 messages/month

$0.12 per additional message

Growth

$139

per month

1,500 messages/month

$0.10 per additional message

Scale

$479

per month

6,000 messages/month

$0.09 per additional message

Business

$949

per month

15,000 messages/month

$0.08 per additional message
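
To estimate which plan fits your volume, the arithmetic can be sketched as below. The plan figures are copied from the pricing above. The billing rule is an assumption: the sketch treats every message beyond the included quota as billed individually at the plan's overage price, with no rounding or tiering, so check your invoice terms before relying on it.

```python
# Plan figures from the pricing above:
# (monthly fee in USD, included messages, USD per additional message).
PLANS = {
    "Starter": (49, 500, 0.12),
    "Growth": (139, 1500, 0.10),
    "Scale": (479, 6000, 0.09),
    "Business": (949, 15000, 0.08),
}


def monthly_cost(plan, messages):
    """Estimated monthly bill: base fee plus overage on messages beyond the quota."""
    fee, included, overage = PLANS[plan]
    extra = max(0, messages - included)
    return round(fee + extra * overage, 2)


def cheapest_plan(messages):
    """Plan with the lowest estimated bill at this volume (first listed wins a tie)."""
    return min(PLANS, key=lambda plan: monthly_cost(plan, messages))
```

Under that assumption, 800 messages on Starter comes to $49 + 300 × $0.12 = $85, which is still below Growth's $139 base fee. At 2,000 messages, Growth ($139 + 500 × $0.10 = $189) is cheaper than Starter ($229).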

Your AI is having conversations right now.

Are they safe?

10-minute integration. Clinical-grade monitoring. Evidence-grade audit trails.